I am currently taking the Cloud Computing module of the MSc Computer Science at the University of London.

These are my personal study notes on the lecture content.

This page covers weeks 1 to 5 of the 12-week module (week 1 started on 8 January 2024; week 5 ended on 11 February 2024).

Module overview

Aims

The module introduces the concepts of distributed computing systems and scalable infrastructures, as well as the development and configuration of complex large-scale applications and systems. Students learn how to develop and deploy modern applications on cloud platforms such as Google Cloud Platform and Amazon EC2. Cloud Computing introduces a variety of modern tools and technologies, including the use of virtual machines and containers, the configuration of distributed systems, the deployment of NoSQL systems and an understanding of how they operate, the development of RESTful services with Python, an understanding of DevOps practices and infrastructure as code, and the use of big data processing systems such as Hadoop MapReduce and Apache Spark.

Weeks

Lectures run until Week 10. Weeks 11 and 12 are set aside for the final coursework.

- Week1: Introduction to cloud computing

- Week2: Cloud services and virtualisation

- Week3: Introduction to cloud web services

- Week4: Developing software as a service (SaaS) applications using REST

- Week5: Introduction to application containerisation

- Week6: Introduction to DevOps and use of containers for fast application development

- Week7: Introduction to distributed computing principles

- Week8: Introduction to distributed database systems

- Week9: Introduction to distributed and big data systems (Part 1)

- Week10: Introduction to distributed and big data systems (Part 2)

Reading list

Essential reading

- Nednur, A. R. ‘Introduction to cloud computing’. (California: O’Reilly Media Incorporated, 2021).

- Newman, S. ‘Building microservices’. (California: O’Reilly Media Incorporated, 2021) 2nd Edition.

Week 1: Introduction to cloud computing

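Notes

- Most of the content was already familiar to me, so these lecture notes are kept brief.

- The first part was an introduction to cloud services: the benefits of the cloud and the terminology used for cloud services.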

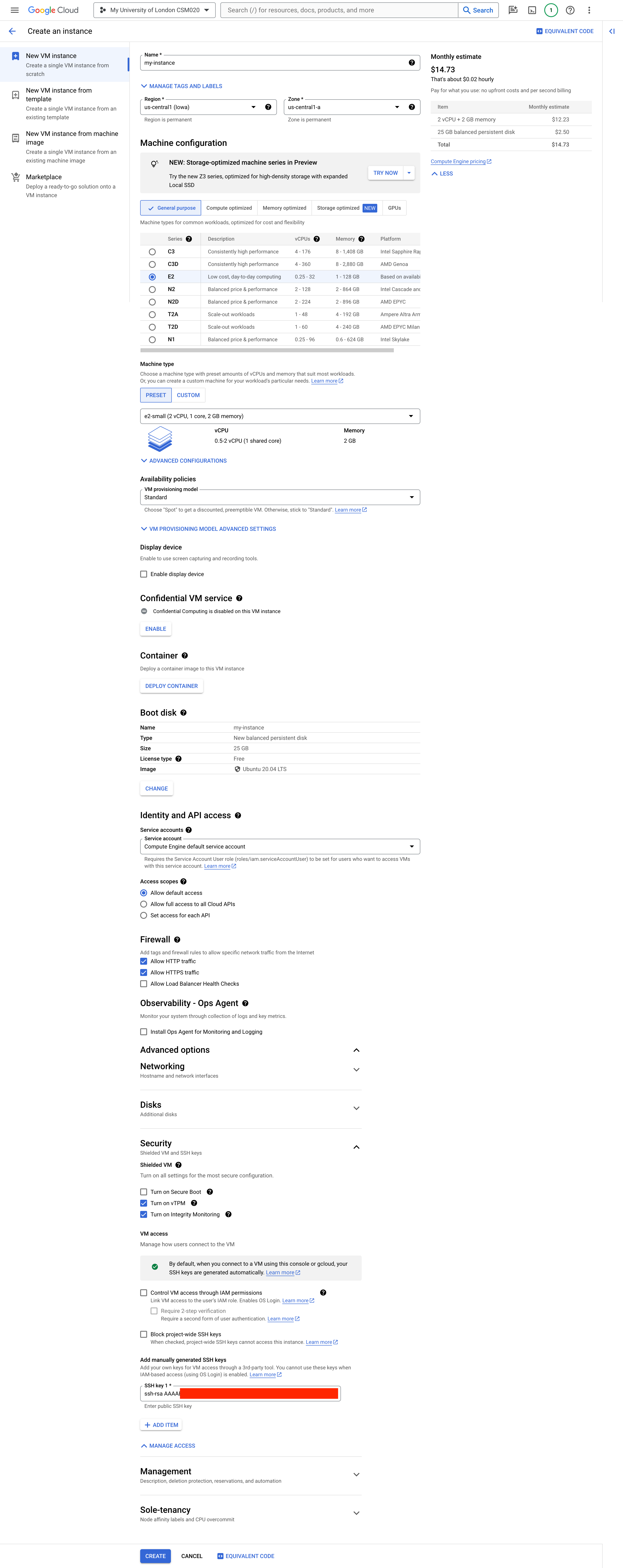

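- The second part was a hands-on session on creating a GCP Compute Engine instance, connecting to it from a local VS Code, and adding and editing files on it.

- The lab was a hands-on session on installing the Apache web server and publishing an HTML file.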

Lecture contents

- Introduction to Cloud

  - Lecture 1: Let’s explore some facts about cloud computing

  - Lecture 2: The cloud need

  - Lecture 3: What is cloud provision and deprovision?

  - Lecture 4: What is virtualisation?

  - Lecture 5: What are the cloud deployment models?

- Introduction to the Google Cloud Platform (GCP)

  - Lecture 6: Introduction to Google Cloud Platform (GCP) services and virtual machines

  - Lecture 7: Connecting GCP with Visual Studio Code

- Labs

  - Lecture 8: Introduction to Linux

  - Lecture 9: Using Linux for user management

  - Lecture 10: Create a virtual machine and install software

Example Compute Engine settings used in the lecture

Week 2: Cloud services and virtualisation

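Notes

- Much of the content was already familiar to me, so these lecture notes are kept brief.

- The first part mainly explained the technologies used in cloud services, in particular hypervisors and virtualisation.

- The second part gave an overview of the technology stacks used to deliver web services, focusing mainly on web APIs.

- The lab was a hands-on session on installing Node.js locally, building a simple web API with Express.js, and connecting it to MongoDB (MongoDB Atlas, the cloud-hosted version).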

Lecture contents

- Virtualisation for cloud computing

  - Lecture 1: Dive into virtualisation for cloud deployments

  - Lecture 2: Introduction to hypervisors and hypervisor types

  - Lecture 3: How are VMs created?

  - Lecture 4: What IT industries run on a cloud?

- Introduction to building APIs with Node.js

  - Lecture 5: Webservice advantages

  - Lecture 6: Exploring NodeJS

- Labs

  - Lab 1: NodeJS setup

  - Lab 2: Building the MiniFilm application using MongoDB and NodeJS

Hypervisor

A hypervisor is software, specialised firmware, or a combination of both that allows physical hardware to be shared across multiple virtual machines.

- Host machine: a computer on which a hypervisor runs one or more virtual machines.

- Guest machine: a virtual machine running on the host.

- Type 1: a hypervisor that runs directly on the bare-metal hardware.

  - Also known as: native, bare-metal or embedded hypervisors

  - Stack: Hardware -> Hypervisor -> Virtual machines

  - Examples: Citrix XenServer, VMware ESXi and Microsoft Hyper-V

- Type 2: a hypervisor that runs on top of a host operating system.

  - Also known as: hosted hypervisors

  - Stack: Hardware -> Operating system -> Hypervisor -> Virtual machines

  - Examples: QEMU, VirtualBox, VMware Player

- Type 1 performs better because there is no host OS consuming resources. It is also generally considered more secure because it runs directly on the hardware.

- Type 2 is simpler to set up and manage, and does not require a dedicated administrator.

Oracle VirtualBox is a free, easy-to-use type 2 hypervisor.

Linux kernel

The Linux kernel is the main component of a Linux operating system (OS) and the core interface between a computer’s hardware and its processes. It mediates between the two, managing resources as efficiently as possible.

The kernel has four main jobs:

- Memory management: keep track of how much memory is used, what it stores and where.

- Process management: decide which processes can use the central processing unit (CPU), when, and for how long.

- Device drivers: act as mediators/interpreters between the hardware and processes.

- System calls and security: receive requests for services from processes.
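You can observe the "system calls" job directly from user space. The following is a minimal sketch that assumes a Linux (or other POSIX) machine. The low-level functions in Python's `os` module are thin wrappers around kernel system calls, so each step is a request for service that the kernel carries out on the process's behalf. The helper name `write_and_read_back` is hypothetical.

```python
import os
import tempfile


def write_and_read_back(data: bytes) -> bytes:
    """Round-trip bytes through a temporary file using low-level os functions.

    On Linux each call below is a thin wrapper around a kernel system call,
    so every step is a request for service that the kernel carries out.
    """
    fd, path = tempfile.mkstemp()       # open(2): the kernel creates the file and returns a file descriptor
    try:
        os.write(fd, data)              # write(2): the kernel copies the bytes into the file
        os.lseek(fd, 0, os.SEEK_SET)    # lseek(2): move the file offset back to the start
        return os.read(fd, len(data))   # read(2): the kernel copies the bytes back into our memory
    finally:
        os.close(fd)                    # close(2): release the file descriptor
        os.remove(path)                 # unlink(2): delete the file


if __name__ == "__main__":
    print("PID assigned by the kernel:", os.getpid())  # getpid(2)
    print(write_and_read_back(b"hello kernel"))
```

The process never touches the disk itself. It only holds an integer file descriptor, and the kernel's device drivers and memory management handle the rest.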

Virtualisation

- Full-virtualisation

  - A host operating system runs directly on the hardware while an unmodified guest operating system runs on the virtual machine, unaware that it is virtualised.

  - Examples: VMware ESXi, Microsoft Virtual Server

- Para-virtualisation

  - The hypervisor is installed on the device and the guest operating systems are installed on top of it. The guest kernels are modified so that they can communicate with the hypervisor directly (through hypercalls).

  - IBM’s VM operating system has offered such a facility since 1972.

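Layer diagram (arrows point towards the hardware):

- Full-virtualisation: [Guest Kernel] -> [Hardware]; [Hypervisor] -> [Hardware] (the hypervisor sits beside the guest kernel, directly on the hardware)

- Para-virtualisation: [Modified Guest Kernel] -> [Hypervisor] -> [Hardware]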

Week 3: Introduction to cloud web services

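Notes

- Most of the content was already familiar to me, so these lecture notes are kept brief.

- The first part gave an overview of web APIs and their design philosophy, for example favouring decoupled microservices over a monolith. It also explained technologies often used to operate web APIs, such as load balancing and autoscaling.

- The second part gave an overview of REST (RESTful APIs), which is widely used in web API design.

- The lab was a hands-on session on building a simple API locally with Express.js that performs CRUD operations on MongoDB following REST API design.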
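The core REST idea behind the lab is that resources are addressed by URL and HTTP methods map onto CRUD operations. The following framework-free Python sketch shows that mapping. It is not the lab's Express.js/MongoDB code. An in-memory dict stands in for the MongoDB collection, and the function returns `(status, body)` instead of running a real HTTP server. The `/posts` resource name, the `handle` function, and the chosen status codes are illustrative assumptions.

```python
import json
from typing import Any

# Hypothetical in-memory store standing in for the MongoDB collection used in the lab.
posts: dict[int, dict[str, Any]] = {}
next_id = 1


def handle(method: str, path: str, body: str = "") -> tuple[int, str]:
    """Map an HTTP method + resource path to a CRUD operation, REST style.

    /posts       -> the collection resource
    /posts/<id>  -> a single item resource
    Returns (HTTP status code, JSON response body).
    """
    global next_id
    parts = path.strip("/").split("/")
    if parts[0] != "posts" or len(parts) > 2:
        return 404, json.dumps({"error": "not found"})

    if len(parts) == 1:                      # collection: /posts
        if method == "GET":                  # Read (all items)
            return 200, json.dumps(list(posts.values()))
        if method == "POST":                 # Create: the server assigns the id
            item = json.loads(body)
            item["id"] = next_id
            posts[next_id] = item
            next_id += 1
            return 201, json.dumps(item)
        return 405, json.dumps({"error": "method not allowed"})

    try:                                     # single item: /posts/<id>
        post_id = int(parts[1])
    except ValueError:
        return 400, json.dumps({"error": "id must be an integer"})
    if post_id not in posts:
        return 404, json.dumps({"error": "not found"})
    if method == "GET":                      # Read (one item)
        return 200, json.dumps(posts[post_id])
    if method == "PATCH":                    # Update (partial)
        posts[post_id].update(json.loads(body))
        posts[post_id]["id"] = post_id       # keep the server-assigned id unchanged
        return 200, json.dumps(posts[post_id])
    if method == "DELETE":                   # Delete
        del posts[post_id]
        return 204, ""
    return 405, json.dumps({"error": "method not allowed"})
```

For example, `handle("POST", "/posts", '{"title": "hello"}')` creates item 1 and returns status 201. A subsequent `handle("GET", "/posts/1")` reads it back. The same URL plus a different method gives a different operation, which is the REST constraint the lab's routes follow.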

Lecture contents

- Introduction to cloud microservices

  - Lecture 1: SaaS and microservices

  - Lecture 2: Microservices principles: Offerings

  - Lecture 3: Microservice ecosystem

  - Lecture 4: Autoscaling and scalability

- REST APIs with Node.js

  - Lecture 5: REST architectural style

  - Lecture 6: RESTful principles

- Labs

  - Lab 1: Building the MiniPost REST microservice
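Autoscaling (Lecture 4) needs a rule for deciding how many instances to run. One widely used rule is target tracking. For example, the Kubernetes Horizontal Pod Autoscaler documents it as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The following sketch is a minimal version of that rule, assuming CPU utilisation as the metric. It is not necessarily the rule taught in the lecture, and the function name and the default min/max bounds are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_cpu_percent: float,
                     target_cpu_percent: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Target-tracking rule: resize the pool so average CPU moves towards the target.

    desired = ceil(current_replicas * current_cpu_percent / target_cpu_percent),
    then clamped to the range [min_replicas, max_replicas].
    """
    if target_cpu_percent <= 0:
        raise ValueError("target_cpu_percent must be positive")
    desired = math.ceil(current_replicas * current_cpu_percent / target_cpu_percent)
    return max(min_replicas, min(max_replicas, desired))


# 4 instances at 90% CPU with a 60% target: scale out to 6
print(desired_replicas(4, 90, 60))
# 4 instances at 30% CPU with a 60% target: scale in to 2
print(desired_replicas(4, 30, 60))
```

Rounding up (`ceil`) errs on the side of having slightly too much capacity rather than too little. The clamp keeps a traffic spike from scaling out without limit.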

Further material

- Intro to MongoDB Atlas in 10 mins | Jumpstart - YouTube

- What is a REST API? | RedHat

- What is a REST API? | IBM

Week 4: Developing software as a service (SaaS) applications using REST

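Notes

- Much of the content was already familiar to me, so these lecture notes are kept brief.

- The first part explained distributed systems and the difference between sequential, concurrent and parallel processing.

- The second part explained synchronous versus asynchronous processing.

- The lab was a hands-on session on building a REST API locally with Express.js. The API supports login with an email address and password and, after login, controls access through JWT (JSON Web Token) authentication.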
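To see what a JWT actually contains, the following is a minimal standard-library Python sketch of HS256 signing and verification. The token layout of `base64url(header).base64url(payload).base64url(signature)` with an HMAC-SHA256 signature follows RFC 7519/7515. This is not the lab's Express.js code, and in practice you would use a maintained JWT library. The secret, the claim values, and the function names are illustrative assumptions.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    # JWTs use URL-safe base64 with the '=' padding stripped.
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))  # restore padding


def sign_jwt(payload: dict, secret: bytes) -> str:
    """Create an HS256 token: header.payload.signature, each part base64url-encoded."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = (
        _b64url_encode(json.dumps(header, separators=(",", ":")).encode())
        + "."
        + _b64url_encode(json.dumps(payload, separators=(",", ":")).encode())
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + _b64url_encode(signature)


def verify_jwt(token: str, secret: bytes) -> Optional[dict]:
    """Return the payload if the signature is valid and the token is unexpired, else None."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
        expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
        # Constant-time comparison avoids leaking how many bytes matched.
        if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
            return None
        header = json.loads(_b64url_decode(header_b64))
        payload = json.loads(_b64url_decode(payload_b64))
    except ValueError:  # wrong number of parts, bad base64 or bad JSON
        return None
    if header.get("alg") != "HS256":  # reject tokens that claim another algorithm
        return None
    if "exp" in payload and time.time() >= payload["exp"]:  # expired token
        return None
    return payload
```

In the access-control flow described above, the server would call `sign_jwt` once the email and password have been checked. It would then call `verify_jwt` on the token sent with each later request (commonly in an `Authorization: Bearer <token>` header). The payload is only encoded, not encrypted, so anyone holding the token can read it. The signature only prevents tampering.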

Lecture contents

- Introduction to SaaS using REST

  - Lecture 1: Distributed Systems – Introduction

  - Lecture 2: Distributed systems technology

  - Lecture 3: Distributed versus non-distributed systems

  - Lecture 4: Synchronous versus asynchronous

- Labs

  - Lab 1: MiniFilm REST Verification and Authentication
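The synchronous versus asynchronous distinction from Lecture 4 can be seen in the order in which work happens. The following sketch uses Python's `asyncio` as a stand-in for the Node.js event loop used in the course. Each `sleep` simulates waiting on I/O, such as a database query. The function names and the shared `log` list are hypothetical. Both versions run on a single thread, so the asynchronous version is concurrent but not parallel.

```python
import asyncio
import time

log: list[str] = []


def fetch_sync(name: str) -> None:
    log.append(f"{name} start")
    time.sleep(0.01)            # blocking wait: nothing else can run meanwhile
    log.append(f"{name} end")


async def fetch_async(name: str) -> None:
    log.append(f"{name} start")
    await asyncio.sleep(0.01)   # non-blocking wait: control returns to the event loop
    log.append(f"{name} end")


def run_sync() -> list[str]:
    log.clear()
    fetch_sync("A")             # B cannot start until A has finished
    fetch_sync("B")
    return list(log)


async def _run_both() -> None:
    await asyncio.gather(fetch_async("A"), fetch_async("B"))


def run_async() -> list[str]:
    log.clear()
    asyncio.run(_run_both())    # A and B wait at the same time
    return list(log)


if __name__ == "__main__":
    print(run_sync())   # ['A start', 'A end', 'B start', 'B end']
    print(run_async())  # both tasks start before either one ends
```

In the synchronous version, the total waiting time is the sum of the individual waits. In the asynchronous version, the waits overlap, which is why a Node.js or asyncio server can keep serving other requests while one request waits on the database.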

Distributed Systems

A distributed computing system is a system whose components are located on different networked computers, which communicate and coordinate their actions by passing messages to one another.

Distributed computing arises when one must solve a problem in terms of distributed entities (usually called processors, nodes, processes, etc.) such that each entity has only partial knowledge of the many parameters involved in the problem to be solved.

Essentially, distributed computing is the idea of having small computers that talk to each other through the network. However, they do not share disks or memory.
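The message-passing idea can be simulated in a single Python process. This is an illustrative sketch only. Threads stand in for networked computers and `queue.Queue` objects stand in for the network. Real threads do share memory, so the sketch deliberately never uses shared state and communicates only through queues. The node names, the `"sum"` request message, and the function names are hypothetical.

```python
import queue
import threading


def node(name: str, inbox: queue.Queue, outbox: queue.Queue, data: list[int]) -> None:
    """A 'node' that only knows its own slice of the data (partial knowledge).

    It waits for a request message, computes a local result and replies with a message.
    """
    request = inbox.get()               # block until a message arrives
    if request == "sum":
        outbox.put((name, sum(data)))   # reply by message, not through shared state


def coordinator(chunks: dict[str, list[int]]) -> int:
    replies: queue.Queue = queue.Queue()
    inboxes: list[queue.Queue] = []
    threads: list[threading.Thread] = []
    for name, chunk in chunks.items():
        inbox: queue.Queue = queue.Queue()
        inboxes.append(inbox)
        t = threading.Thread(target=node, args=(name, inbox, replies, chunk))
        t.start()
        threads.append(t)
    for inbox in inboxes:
        inbox.put("sum")                # send a request message to every node
    total = sum(replies.get()[1] for _ in chunks)  # combine the partial results
    for t in threads:
        t.join()
    return total


if __name__ == "__main__":
    print(coordinator({"node-1": [1, 2, 3], "node-2": [4, 5], "node-3": [6]}))
```

No node ever sees the whole data set. The coordinator obtains the global answer only by exchanging messages, which matches the "partial knowledge" description above.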

- Why do we need distributed systems?

  - The power wall, the latency wall and data trends indicate that the era of single-thread performance improvement through Moore’s law is ending.

  - The extra transistors on a chip are now used to increase system throughput (the total number of instructions executed per unit of time) rather than to reduce latency (the time for a job to finish).
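The following worked example illustrates the throughput/latency distinction under a simplifying assumption. Each of $n$ cores runs one independent job at a time, and a single job always takes time $T$ regardless of how many cores the chip has:

$$
\text{latency} = T, \qquad \text{throughput}(n) = \frac{n}{T}
$$

With $T = 2\,\mathrm{s}$, one core completes $\tfrac{1}{2} = 0.5$ jobs/s and eight cores complete $\tfrac{8}{2} = 4$ jobs/s, yet each individual job still takes $2\,\mathrm{s}$. Adding cores (or machines, as in a distributed system) raises throughput. A single job only finishes sooner if the job itself can be split across those cores or machines.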

Further material

- What is OAuth 2.0 and what does it do for you? - Auth0

- What is OAuth really all about - Java Brains - YouTube

Week 5: Introduction to application containerisation

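Notes

- Most of the content was already familiar to me, so these lecture notes are kept brief.

- The lectures covered development models (waterfall and agile), DevOps, and containerising applications with Docker.

- The lab covered how to use Docker, how to write a Dockerfile, and the steps for creating a GitHub repository and pushing code to it. It finished with a hands-on deployment: cloning the GitHub repository inside a GCP Compute Engine instance and running the cloned code from a Dockerfile.

Lecture contents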

- Building REST applications

  - Lecture 1: Software development models

  - Lecture 2: DevOps – from your laptop to production

  - Lecture 3: Introduction to the Docker platform

- Labs

  - Lab 1: Introduction to Docker

  - Lab 2: Pushing code to GitHub

  - Lab 3: Pushing code to GitHub and Docker

Further material

- What is Docker? - .NET | Microsoft Learn